We all have that one prideful friend who just can’t admit they were wrong. But is it truly just pride?

Research suggests that our ability to recall our own past predictions may not be as good as we would like to believe [1][2]. So maybe your friend is not simply unwilling to admit they wrongly expected something to happen. Instead, they might be unable to remember ever making the mistake.

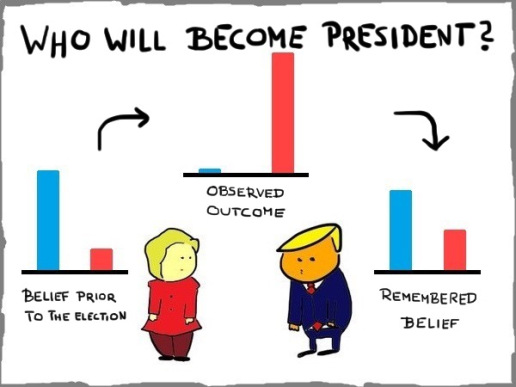

That is the so-called hindsight bias: the inability to reliably reconstruct previous forecasts once an outcome becomes known. It is a pervasive force that hinders self-evaluation, may cause over-confidence, and damages the effectiveness of agents in the many fields that depend on their ability to predict outcomes and, perhaps more importantly, to be aware of their limitations in doing so.

The early seventies were a very interesting time for the study of biases and heuristics, and that’s when our story starts. It was in Amos Tversky and Daniel Kahneman’s research program in Israel that a young student by the name of Baruch Fischhoff came across Paul Meehl’s “Why I do not attend case conferences” [3]. About Meehl’s work, Fischhoff wrote in 2007:

“One of his many insights concerned clinicians’ exaggerated feeling of having known all along how cases were going to turn out.” [4]

It’s easy to see how this attitude can be damaging. Health professionals who underestimate how much they didn’t know before an outcome occurred are more likely to be over-confident in their predictive skills. This could lead, in turn, to overly drastic measures, or to none at all, for a given patient profile. Take, for instance, an over-confident doctor who has a hunch that one of their patients does not have cancer and therefore refrains from ordering certain exams that would usually be recommended for a patient with such symptoms. Clearly, whether or not the patient turns out to have the disease, the doctor was irresponsible in putting intuition before evidence.

For the graduate students attending this research program, finding a new bias or heuristic meant securing a place in the history of the field’s early development, and this reading planted a seed in Fischhoff’s mind. So he went on to design an experiment with Ruth Beyth [1]. In the first part, participants were asked to make predictions about (that is, to assign probabilities to) the possible outcomes of Nixon’s visits to China and the USSR. About two weeks later, once those outcomes were known, some of the participants were asked to recall what they had answered before. Others were asked after 3-6 months. The results were clear: most people, especially in the latter group, tended to overestimate the probability they had assigned to events that did happen in the meantime. Most also underestimated the probability they had given to events that didn’t happen (curiously, less so among those who waited the longest).

This phenomenon is so prevalent that even when participants in later studies were informed of its existence, their answers were still contaminated by the hindsight bias (Fischhoff, 1976; Pohl and Hell, 1996; Bernstein et al., 2011). But what makes us prone to such a deviation from rationality? Some argue that our memories are distorted by new states of the world (that is, after some event occurs). Others argue that our reconstruction of our previously naïve selves is imperfect. There are even those who blame our desire, not necessarily conscious, to be considered smarter than we are and to maintain a sense of self-worth [2].

Our view of the world is so fundamentally altered when we are exposed to new knowledge that we can’t manage (or at least there’s plenty of evidence that it’s hard) to put ourselves in the shoes of our past selves. The hindsight bias is a statement about our inability to simulate ignorance. The problem that arises is that we may severely overestimate the past probabilities of events that have already happened, especially when we never had to state our estimates beforehand.

The classic example would be elections. Once the result comes out, everyone turns out to have been sure of it for months. It’s amazing. People get the spotlight on TV to draw the most straightforward causal connections. The reasons are clear, and everyone now understands the result. “I knew it all along” is the go-to sentence. But did they?

This phenomenon is called, quite mockingly, creeping determinism. The truth is that the occurrence of certain events does not change their previous probabilities. If a die gives you six, you wouldn’t go on national news to explain why. The probability was there all along. Of course, when it comes to elections, there are causal relations to be explored, but the very nature of uncertainty should make us careful when someone claims to have known something all along when a lot of reasonable people didn’t.

This search (the all-important search) for people who predicted, or claim to have predicted, the result of an election or any other event is inherently flawed in itself, even with no hindsight bias involved. It impoverishes the debate by giving the spotlight to people who might have just gotten lucky. If a lot of people try to guess the outcome of a major event, it is reasonable to expect that at least some will get it right. Trying to find them, a posteriori, is just a statistical exercise that confirms this. That’s how lotteries work, and yet we don’t see lottery winners going on national news to teach you how to get rich! Add to that the fact that people are likely to believe they were right all along, and it becomes very hard to argue for the lessons of an unknown predictor brought to fame by a lucky guess.
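To put a rough number on the lucky-guess argument, here is a minimal sketch in Python. It rests on assumptions that are not part of the studies cited above: every forecaster guesses independently, each guess is right with the same probability (a coin flip by default), and the function name `prob_at_least_one_perfect` is made up for this illustration.

```python
def prob_at_least_one_perfect(n_forecasters: int, n_events: int,
                              p_correct: float = 0.5) -> float:
    """Chance that at least one forecaster calls every event correctly.

    Assumes (for illustration only) that forecasters guess independently
    and that each single guess is right with probability p_correct.
    """
    # Probability that one given forecaster gets all events right by luck.
    p_perfect = p_correct ** n_events
    # Complement of "nobody at all gets a perfect record".
    return 1.0 - (1.0 - p_perfect) ** n_forecasters


if __name__ == "__main__":
    # Ten yes/no events, with crowds of increasing size guessing at random.
    for crowd in (1, 100, 10_000):
        chance = prob_at_least_one_perfect(crowd, n_events=10)
        print(f"{crowd:>6} forecasters: {chance:.4f}")
```

Under these assumptions, a single coin-flipping forecaster has about a 0.1% chance of calling ten events in a row, a hundred of them together have roughly a 9% chance of producing one perfect record, and with ten thousand it is all but guaranteed. The "prophet" found afterwards tells us more about the size of the crowd than about anyone's skill.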

In hindsight, this concept seems quite obvious, doesn’t it?

Rafael Pintro Schmitt

[1] 13_Org_Behavior_and_Human_Perf_13_1975_Fischhoff.pdf

[2] mahdavi17a.pdf

[3] 099caseconferences.pdf

[4]

Further reading:

https://www.bbc.com/worklife/article/20190430-how-hindsight-bias-skews-your-judgement

https://en.wikipedia.org/wiki/Dunning%E2%80%93Kruger_effect

https://en.wikipedia.org/wiki/Bayesian_probability

https://www.britannica.com/topic/hindsight-bias

https://en.wikipedia.org/wiki/Hindsight_bias

Credit for featured image: https://www.shutterstock.com/es/g/Elenarts